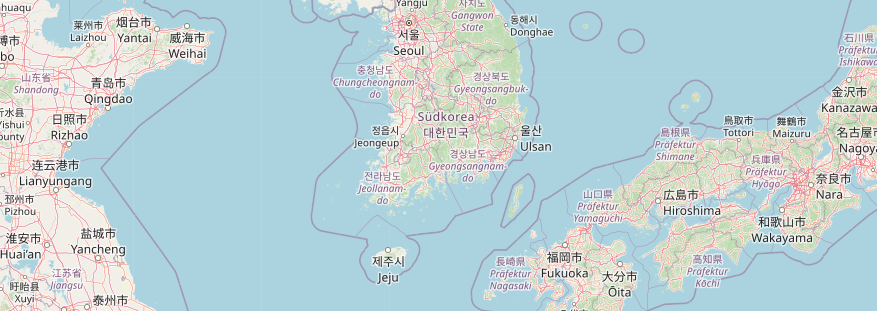

For most Westerners, the standard tile layer is basically unreadable in regions of the world where Latin script is not the norm. For this reason, the German style has used localized names for years.

Unfortunately, this was implemented in a way that was somewhat incompatible with the standard tile layer: getting localized names there would have required changes to the style itself.

Fortunately, I have now changed this, which should simplify things a lot for people using either the standard tile layer or the German style.

Now, if all you want is the standard tile layer with localized names, all you have to do is use openstreetmap-carto-flex-l10n.lua from the German style for the database import instead of the one from the standard tile layer. This still requires installing osml10n, but once that is done, names can easily be localized during database import (or update).

This is likely to come in especially handy if your target language is not German but something like English, French or Spanish, in which case simply using our tile servers, which provide the German style, is not an option.

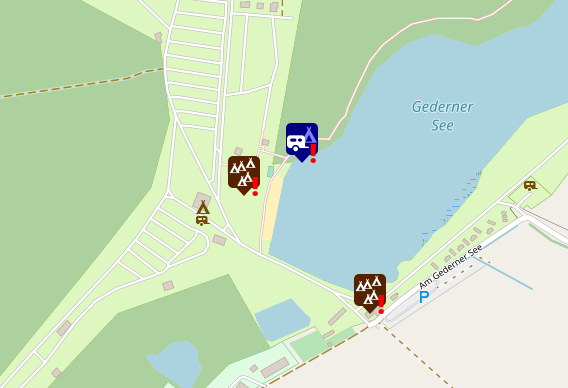

If you also want German road colors in addition to localized names, just go for the German style as before; it now also uses the upstream database layout. It is now even possible to render the German style without localized names, but I doubt that this is something anybody will want to do.

Technically, all of this localization works by replacing the name tag of OpenStreetMap objects, during database import, with the name we want to see on the map. I should probably add an option to keep an unmodified version of the name in the hstore columns of the database.
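
To illustrate the idea of rewriting the name tag at import time, here is a minimal Python sketch. It is not the actual osml10n code, which is more elaborate; it only assumes that a localized name is taken from a `name:<lang>` tag when one exists. The function `localize_name`, its `keep_original` option and the `name_orig` key are hypothetical names made up for this example.

```python
def localize_name(tags, lang, keep_original=False):
    """Return a copy of tags whose 'name' is replaced by 'name:<lang>' if present.

    Simplified illustration only; 'name_orig' is a hypothetical key used to
    keep the unmodified name alongside the localized one.
    """
    result = dict(tags)  # never modify the caller's tags
    original = tags.get("name")
    localized = tags.get(f"name:{lang}")
    if localized and localized != original:
        result["name"] = localized
        if keep_original and original is not None:
            result["name_orig"] = original
    return result


# Example object with names in several languages
tokyo = {"name": "東京", "name:en": "Tokyo", "name:de": "Tokio"}

print(localize_name(tokyo, "en"))
print(localize_name(tokyo, "de", keep_original=True))
print(localize_name(tokyo, "fr"))  # no French name: 'name' stays untouched
```

Because the replacement happens before the data reaches the database, the style itself does not need to know anything about localization; it simply renders whatever ends up in the name column.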
